Reinforcement Learning from Human Feedback (RLHF)

RLHF, short for reinforcement learning from human feedback, uses human feedback to optimize a machine learning model so that its outputs match human preferences more closely, which makes the model's self-learning more efficient. RLHF is used in generative AI applications, including large language models.

RLHF is most often used in NLP tasks, but it is also prominent in other generative AI applications. Whatever the application, the ultimate goal is for the AI to mimic human behavior and decision-making. The machine learning model must incorporate human input as training data so that the AI can mimic humans more closely when completing hard tasks.

Maximize model performance

Introduce more complex training parameters

Enhance user satisfaction with software

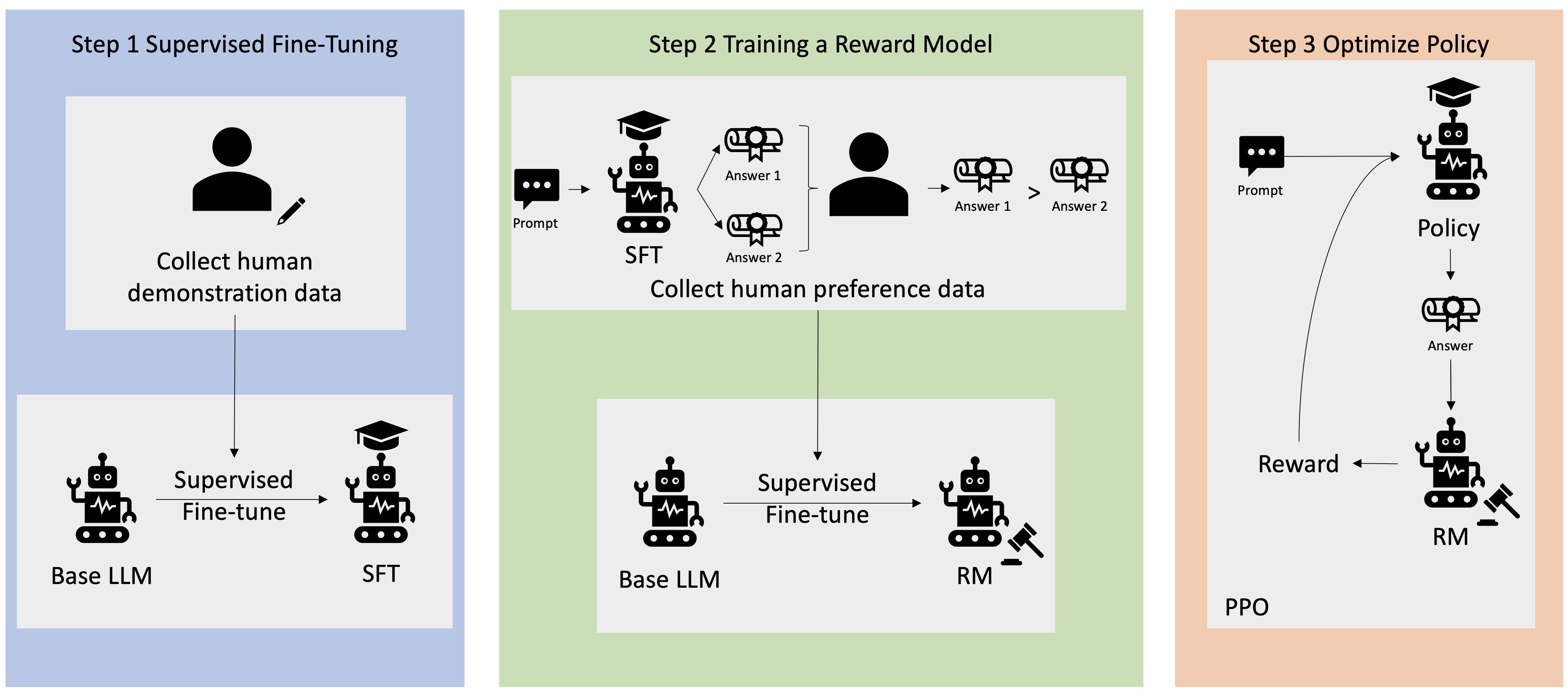

Building an RLHF model involves five stages:

Data collection from primary or secondary sources

Data preprocessing

Supervised fine-tuning of a language model

Building a separate reward model

Optimizing the language model against the reward model
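To make the last two stages more concrete, here is a minimal sketch in plain Python. It assumes a common formulation that this article does not itself specify: the reward model is trained on pairs of responses with a pairwise (Bradley-Terry style) loss, and during optimization the reward is reduced by a penalty when the tuned model drifts from the supervised fine-tuned reference. The function names, the `beta` parameter, and the scalar inputs are all hypothetical and chosen only for illustration.

```python
import math


def pairwise_reward_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Loss for one human comparison: -log(sigmoid(r_chosen - r_rejected)).

    The loss is small when the reward model scores the human-preferred
    response higher than the rejected one, and large otherwise.
    """
    margin = reward_chosen - reward_rejected
    # Numerically stable form of log(1 + exp(-margin)).
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def kl_penalized_reward(
    reward: float,
    logp_policy: float,
    logp_reference: float,
    beta: float = 0.1,  # assumed penalty weight, not taken from the article
) -> float:
    """Reward signal for the optimization stage (illustrative assumption).

    Subtracts beta * (log-prob under the tuned model minus log-prob under
    the reference model), so responses the tuned model favors far more
    than the reference model are rewarded less.
    """
    return reward - beta * (logp_policy - logp_reference)


if __name__ == "__main__":
    # Reward model agrees with the human ranking: low loss.
    print(pairwise_reward_loss(2.0, 0.0))
    # Reward model disagrees with the human ranking: high loss.
    print(pairwise_reward_loss(0.0, 2.0))
    # Tuned model assigns higher log-probability than the reference: penalized.
    print(kl_penalized_reward(1.0, logp_policy=-1.0, logp_reference=-2.0, beta=0.5))
```

In a real system the rewards and log-probabilities would come from neural networks and the losses would be averaged over batches, but the scalar version shows how human comparisons turn into a training signal.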

RLHF has many applications in the field of generative AI.

RLHF is one of the backbones of modern generative AI tools such as ChatGPT and applications built on GPT-4. It is also used in chatbots, robotics, and gaming.